OptiStructures: Fabrication of Room-Scale Interactive Structures with Embedded Fiber Bragg Grating Optical Sensors and Displays

abstract

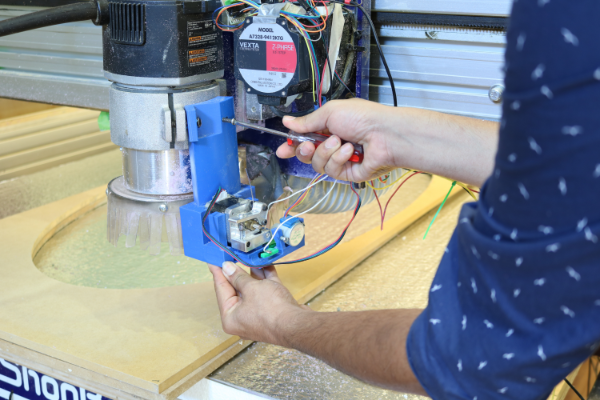

A topic of considerable recent interest in the "smart building" community is the construction of interactive devices from sensors and the rapid creation of such objects using new fabrication methods. However, much of this work has been done at what might be called hand scale, with less attention paid to larger objects and structures (at furniture or room scale), even though we are very often literally surrounded by such objects.

In this work, we present a new set of techniques for creating interactive objects at these scales. We demonstrate the fabrication of both input sensors and displays directly into cast materials (those formed from a liquid or paste that solidifies in a mold), including concrete, plaster, polymer resins, and composites. Through our novel set of sensing and fabrication techniques, we enable human activity recognition at room scale and across a variety of materials. Our techniques create objects that appear the same as typical passive objects but contain internal fiber optics for both input sensing and simple displays.

reference

Saiganesh Swaminathan, Jonathon Fagert, Michael Rivera, Andrew Cao, Gierad Laput, Hae Young Noh, and Scott E. Hudson. 2020. OptiStructures: Fabrication of Room-Scale Interactive Structures with Embedded Fiber Bragg Grating Optical Sensors and Displays. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 4, 2. https://doi.org/10.1145/3397310